Yogesh Mali · programming · 6 min read

Agentic Patterns

AI is rapidly changing how we build products, and AI agents (or agentic AI) are a big part of that change. So how do you design a reliable and robust agentic system?

In this post, I will cover different patterns you can use to build agentic systems. One key difference between agentic systems and regular distributed systems is that agents have a feedback loop and can course-correct their actions. Distributed systems, on the other hand, tend to be more deterministic despite their many moving parts.

Introduction

Before we dive into the patterns, it is critical to know why and when to use them.

Why use Agentic Patterns?

When we build distributed systems, we rely on system design patterns. Patterns help us recognize recurring problem shapes and develop systemic thinking. Given a vague problem, an engineer equipped with system design patterns (or agentic patterns) quickly recognizes which pattern applies.

When to use Agentic Patterns?

Since everyone wants to build AI products, using agentic patterns is becoming standard practice. But there are scenarios where you should not use them, and scenarios where they are a good fit.

- If a simple solution can solve the problem, you don't need an agentic pattern.

- Agentic systems increase overall system complexity; make sure you are aware of the risks.

- If you need a deterministic approach to the problem, do not use agentic patterns.

- Use an agentic pattern when there are multiple sources of input and you need to produce an output based on all of them. A good example is an insurance claim review system: health and car insurers need many different types of information before they can decide on a claim.

- Use an agentic pattern when the goal is accuracy rather than low latency, especially for complex and dynamic tasks.

Patterns

I will try to cover the following patterns:

- Prompt Chaining

- Routing

- Parallelization

- Reflection Pattern

- Tool Pattern

- Orchestrator Pattern (covered in a follow-up post)

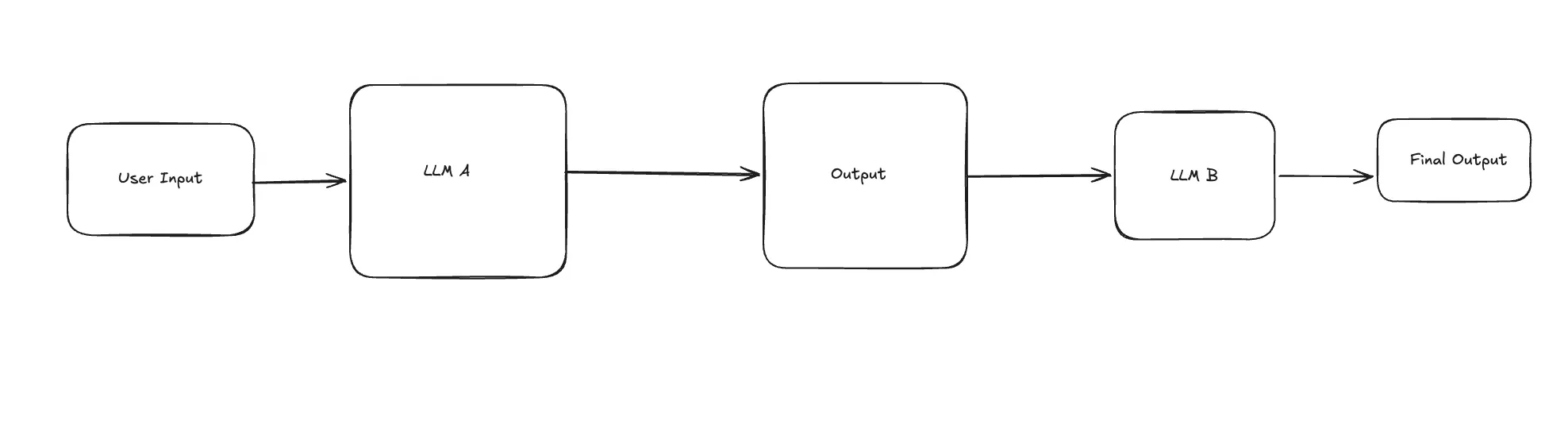

Prompt Chaining

This is the easiest pattern to understand, and tools like Claude Code and Codex use it in some capacity.

The diagram above shows a user sending input to the first LLM, which produces an output. That output is then fed to a second LLM, which produces the final result. This pattern breaks a complex task down into multiple sequential steps.
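A minimal sketch of prompt chaining, assuming each LLM call can be modeled as a plain function from a prompt string to a response string. `fake_outline_llm` and `fake_writer_llm` are hypothetical stand-ins for real model calls; swap in your provider's client. The optional "gate" between steps is a simple programmatic check that stops the chain early if an intermediate output looks wrong.

```python
from typing import Callable, Optional

# An LLM is modeled as a function: prompt in, text out.
# In a real system this would wrap a provider SDK call.
LLM = Callable[[str], str]


class ChainError(Exception):
    """Raised when an intermediate step fails its gate check."""


def run_chain(
    steps: list[tuple[LLM, str, Optional[Callable[[str], bool]]]],
    user_input: str,
) -> str:
    """Run each step in order, feeding the previous output into the next prompt.

    Each step is (llm, prompt_template, gate). The template must contain
    "{input}", which is replaced by the previous step's output. The optional
    gate is a programmatic check on that step's output.
    """
    text = user_input
    for index, (llm, template, gate) in enumerate(steps):
        prompt = template.format(input=text)
        text = llm(prompt)
        if gate is not None and not gate(text):
            raise ChainError(f"step {index} failed its gate check")
    return text


# Hypothetical stand-ins for two model calls.
def fake_outline_llm(prompt: str) -> str:
    topic = prompt.split(":", 1)[1].strip()
    return f"1. What is {topic}\n2. Why {topic} matters"


def fake_writer_llm(prompt: str) -> str:
    outline = prompt.split(":", 1)[1].strip()
    return "Draft based on outline -> " + outline.replace("\n", " | ")


if __name__ == "__main__":
    steps = [
        (fake_outline_llm, "Write an outline for: {input}", lambda out: out.startswith("1.")),
        (fake_writer_llm, "Write a draft from this outline: {input}", None),
    ]
    print(run_chain(steps, "prompt chaining"))
```

Each step only sees the previous step's output, which keeps every prompt small and focused; the gate is where you catch a bad intermediate result before it propagates.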

A good example is Codex. The user asks Codex to do something, and Codex calls the model with that input. The model responds either with a text response or with a tool call. The tool result (or the model's response) is then appended to the running prompt, which layers everything from the system prompt to the user input to the tool outputs. The loop repeats until the model produces the final result.
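The loop described above can be sketched as follows. This illustrates the idea only and is not Codex's implementation; `scripted_model` and `fake_shell` are hypothetical stand-ins for a model and a command runner. The point is that every turn appends to one growing transcript, so each model call sees the system prompt, the user input, and all earlier tool outputs.

```python
from dataclasses import dataclass, field


@dataclass
class Transcript:
    """The layered prompt: system prompt, user input, then each turn's output."""
    messages: list[dict] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})

    def render(self) -> str:
        # Flatten all layers into the single prompt sent to the model.
        return "\n".join(f"[{m['role']}] {m['content']}" for m in self.messages)


def scripted_model(prompt: str) -> dict:
    """Hypothetical model: requests one tool call, then gives a final answer."""
    if "[tool]" not in prompt:
        return {"type": "tool_call", "command": "ls"}
    return {"type": "final", "content": "Found 2 files; task complete."}


def fake_shell(command: str) -> str:
    # Hypothetical stand-in for actually executing a command.
    return "main.py\ntest_main.py" if command == "ls" else ""


def agent_loop(system_prompt: str, user_input: str, max_turns: int = 5) -> tuple[str, Transcript]:
    transcript = Transcript()
    transcript.add("system", system_prompt)
    transcript.add("user", user_input)
    for _ in range(max_turns):
        reply = scripted_model(transcript.render())
        if reply["type"] == "final":
            transcript.add("assistant", reply["content"])
            return reply["content"], transcript
        # Tool requested: record the request, run it, append the output as a new layer.
        transcript.add("assistant", f"run: {reply['command']}")
        transcript.add("tool", fake_shell(reply["command"]))
    raise RuntimeError("no final answer within max_turns")


if __name__ == "__main__":
    answer, transcript = agent_loop("You are a coding agent.", "List the project files.")
    print(transcript.render())
```

The `max_turns` cap is a design choice worth keeping in any real loop: without it, a model that keeps requesting tools never terminates.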

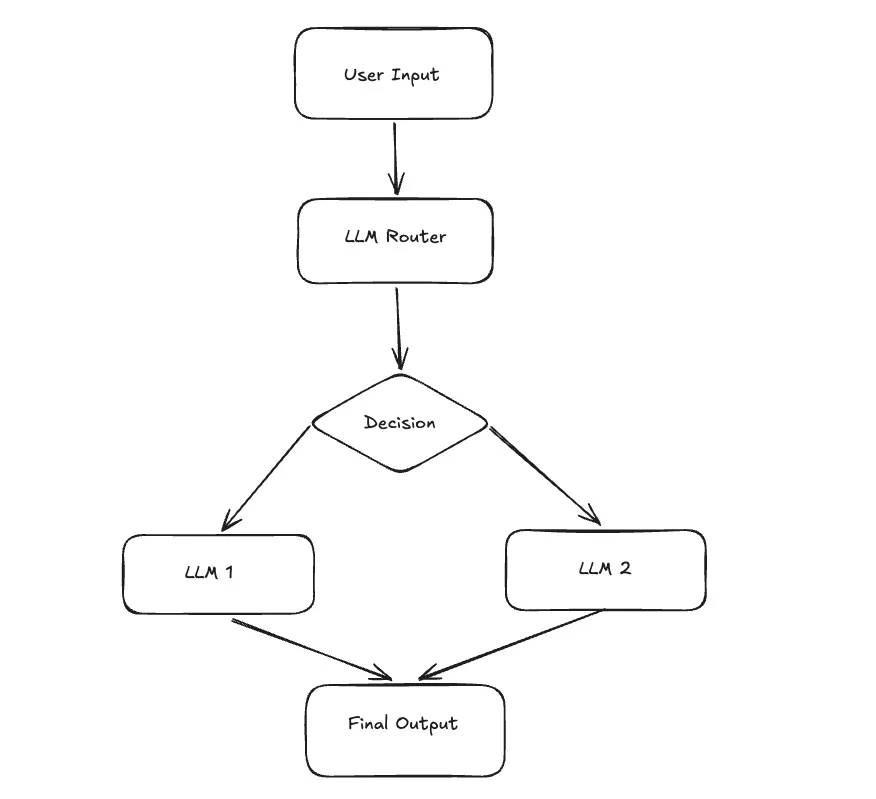

Routing

This pattern involves multiple LLMs. The first LLM acts as a router: it validates the user input, breaks the query down into specialized subtasks, and routes each subtask to the appropriate LLM with a specialized prompt. Once a task is routed, the selected agent takes over and completes it. This pattern improves efficiency and can reduce cost by using smaller models for specialized tasks.
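A minimal routing sketch. To keep it runnable, the router is a keyword classifier standing in for the router LLM; in practice you would ask a model to pick the category. The route table maps each category to a specialized prompt and a model tier, and the model names (`small-model`, `medium-model`) and `echo_model` are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Route:
    model: str          # hypothetical model name; smaller models for narrow tasks
    system_prompt: str  # specialized prompt for this category


ROUTES = {
    "billing": Route("small-model", "You are a billing specialist."),
    "technical": Route("medium-model", "You are a technical support engineer."),
    "general": Route("small-model", "You are a friendly general assistant."),
}


def classify(query: str) -> str:
    """Stand-in for the router LLM: pick a category for the query."""
    text = query.lower()
    if any(word in text for word in ("invoice", "refund", "charge")):
        return "billing"
    if any(word in text for word in ("error", "crash", "install")):
        return "technical"
    return "general"


def route(query: str, call_model: Callable[[str, str, str], str]) -> str:
    """Validate the query, classify it, and dispatch to the specialized handler."""
    if not query.strip():
        raise ValueError("empty query")
    selected = ROUTES[classify(query)]
    return call_model(selected.model, selected.system_prompt, query)


def echo_model(model: str, system_prompt: str, query: str) -> str:
    # Hypothetical stand-in for a real model call: reports where the query went.
    return f"{model} | {system_prompt}"


if __name__ == "__main__":
    print(route("Install fails with an error", echo_model))
```

Keeping the route table as data (rather than branching inside `route`) makes it easy to add a category or move one to a cheaper model without touching the dispatch logic.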

If you have used Claude Code as a CLI tool with Claude Opus for planning, it first plans the task and breaks it down into multiple substeps. During implementation, it can route those substeps to Claude Sonnet or Haiku models.

Similarly, when car insurance companies process claims, they can pass the claim details to one model and the images to another. A router can handle this division of work.
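The same idea applies when the split is by input type rather than by intent. A sketch, assuming a claim arrives as a list of parts tagged with a MIME type; `ClaimPart`, `text_model`, and `vision_model` are hypothetical names for illustration, not any insurer's actual pipeline.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ClaimPart:
    mime_type: str   # e.g. "text/plain" or "image/jpeg"
    payload: bytes


def split_claim(parts: list[ClaimPart]) -> dict[str, list[ClaimPart]]:
    """Router step: group claim parts by which kind of model should see them."""
    groups: dict[str, list[ClaimPart]] = {"text": [], "image": []}
    for part in parts:
        kind = "image" if part.mime_type.startswith("image/") else "text"
        groups[kind].append(part)
    return groups


def review_claim(
    parts: list[ClaimPart],
    text_model: Callable[[list[ClaimPart]], str],
    vision_model: Callable[[list[ClaimPart]], str],
) -> dict[str, str]:
    """Send each group to its hypothetical specialized model and collect findings."""
    groups = split_claim(parts)
    findings: dict[str, str] = {}
    if groups["text"]:
        findings["text"] = text_model(groups["text"])
    if groups["image"]:
        findings["image"] = vision_model(groups["image"])
    return findings
```

The findings dictionary could then be passed to a final decision step; that step is outside the scope of this sketch.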

Another good use case is customer support AI assistants. The user's query is fed to an LLM router, which figures out which specialized LLM should handle it.

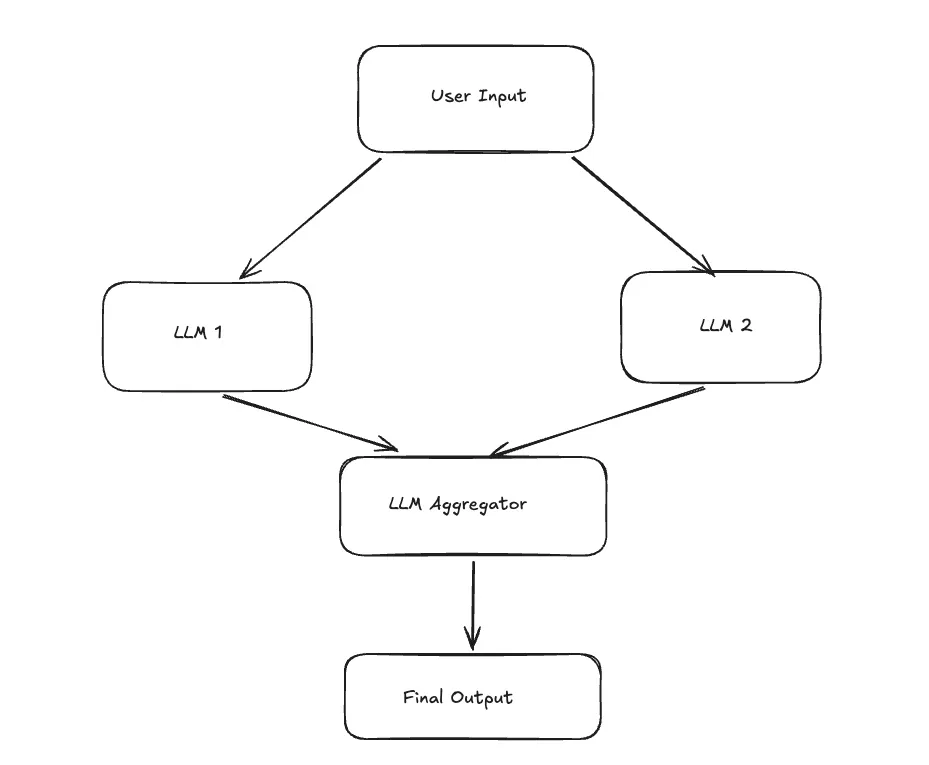

Parallelization

Like the routing pattern, parallelization involves multiple LLMs. A complex task is broken down into independent subtasks, and two or more LLMs handle them in parallel. Each LLM's output is then fed to an aggregator LLM, which synthesizes all the outputs into a single result. The major advantage of this pattern is lower latency, since independent subtasks run concurrently.
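A fan-out/fan-in sketch using `asyncio.gather`, assuming each LLM call is an async function (most provider SDKs offer async clients, but the `AsyncLLM` signature here is an assumption). `fake_worker` and `fake_aggregator` are hypothetical stand-ins; the worker sleeps to simulate network latency.

```python
import asyncio
from typing import Awaitable, Callable

# An async LLM call: prompt in, text out (assumed signature).
AsyncLLM = Callable[[str], Awaitable[str]]


async def fan_out_fan_in(
    subtasks: list[str],
    worker: AsyncLLM,
    aggregator: Callable[[list[str]], Awaitable[str]],
) -> str:
    """Run independent subtasks concurrently, then synthesize one result."""
    # asyncio.gather runs all coroutines concurrently and preserves input order.
    partials = await asyncio.gather(*(worker(task) for task in subtasks))
    return await aggregator(list(partials))


async def fake_worker(prompt: str) -> str:
    await asyncio.sleep(0.1)  # simulated network latency
    return f"done({prompt})"


async def fake_aggregator(outputs: list[str]) -> str:
    # A real aggregator would be another LLM call that synthesizes the outputs.
    return " + ".join(outputs)


if __name__ == "__main__":
    print(asyncio.run(fan_out_fan_in(["a", "b", "c"], fake_worker, fake_aggregator)))
```

With three subtasks that each wait about 0.1 s, total wall time is roughly 0.1 s rather than 0.3 s, which is the latency benefit described above. This only holds when the subtasks are truly independent.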

When you want to analyze large, complex documents, this pattern can follow a map-reduce style approach: each LLM processes one chunk of the document, and the aggregator combines the partial results. Another good use case is getting a consensus on a task from different personas. When designing a user interface, for example, you can gather opinions from a UX designer, a UX researcher, and a behavioral psychologist. These different perspectives (from different LLM personas) can help you build the right interface.
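A map-reduce sketch for long documents, assuming synchronous model calls that are I/O-bound (so threads are a reasonable fit). The word-count chunking and the `map_llm`/`reduce_llm` callables are simplifying assumptions; real systems usually chunk by tokens and often overlap chunks.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def chunk_text(text: str, max_words: int) -> list[str]:
    """Split a document into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def map_reduce(
    document: str,
    map_llm: Callable[[str], str],
    reduce_llm: Callable[[list[str]], str],
    max_words: int = 200,
    max_workers: int = 4,
) -> str:
    chunks = chunk_text(document, max_words)
    # Map: analyze each chunk in parallel; pool.map keeps results in chunk order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(map_llm, chunks))
    # Reduce: a single call combines the partial results.
    return reduce_llm(partials)
```

The persona example works the same way: map over a list of persona system prompts instead of document chunks, then let the reducer reconcile the opinions.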

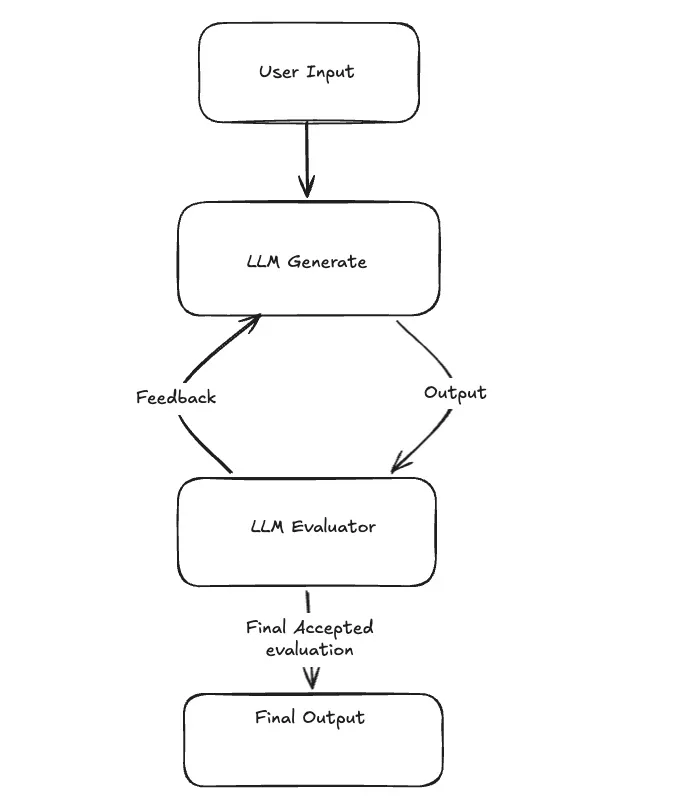

Reflection Pattern

The reflection pattern is the first true feedback-loop pattern in this list: the agent uses a self-correction loop. The user submits input, which is fed to an LLM that generates an output. This output is then submitted to the same LLM for evaluation and critique. The evaluation feedback is sent back to the generating LLM, which produces an adjusted output. The loop continues until the evaluator confirms the requirements are met, and then the final output is produced.

The evaluation prompt is usually different from the generation prompt, but both the generator and the evaluator can use the same model.
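A minimal reflection loop in which one model function plays both roles and only the prompt changes. The `GENERATE`/`CRITIQUE` templates, the `APPROVED` convention, and `fake_model` are assumptions for illustration; `max_rounds` bounds the loop so it cannot run forever.

```python
from typing import Callable

# Both roles use the same underlying model; only the prompt differs.
LLM = Callable[[str], str]

GENERATE = "Task: {task}\nPrevious draft: {draft}\nFeedback: {feedback}\nWrite an improved answer."
CRITIQUE = "Task: {task}\nDraft: {draft}\nReply APPROVED if it meets the requirements, else list problems."


def reflect(task: str, model: LLM, max_rounds: int = 3) -> tuple[str, int]:
    """Generate, critique, and revise until the critic approves or rounds run out."""
    draft, feedback = "", ""
    for round_number in range(1, max_rounds + 1):
        draft = model(GENERATE.format(task=task, draft=draft, feedback=feedback))
        feedback = model(CRITIQUE.format(task=task, draft=draft))
        if feedback.strip().startswith("APPROVED"):
            return draft, round_number
    return draft, max_rounds  # best effort after hitting the round limit


def fake_model(prompt: str) -> str:
    """Hypothetical model. Generation: a short draft first, a fuller one after
    feedback. Critique: approve only drafts containing the word "complete"."""
    if "Write an improved answer" in prompt:
        return "A longer, complete answer." if "too short" in prompt else "Short."
    draft = prompt.split("Draft:", 1)[1]
    return "APPROVED" if "complete" in draft else "Problem: too short."


if __name__ == "__main__":
    print(reflect("Explain the reflection pattern.", fake_model))
```

Returning the round count alongside the draft makes it easy to log how often the critic forces a revision, which is a useful signal when tuning the prompts.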

The biggest use case for this pattern is code generation. Current code generation tools start implementing based on user input, but as they execute commands, they notice errors or test failures and use that feedback to self-correct the generated code.
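In code generation, the evaluator does not have to be an LLM at all: running the tests is the critique. A sketch, assuming a hypothetical `fake_code_model` that writes buggy code first and fixes it when given the error. `exec()` is used only to keep the example short; never run untrusted model output this way outside a sandbox.

```python
from typing import Callable, Optional

# Hypothetical code-generating model: (task, last error or None) -> source code.
CodeModel = Callable[[str, Optional[str]], str]


def run_tests(source: str, tests: str) -> Optional[str]:
    """Execute generated code plus tests; return an error message, or None if all pass."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # define the generated functions
        exec(tests, namespace)   # run assertions against them
    except Exception as exc:     # test failures, syntax errors, runtime errors
        return f"{type(exc).__name__}: {exc}"
    return None


def generate_until_green(
    task: str, tests: str, model: CodeModel, max_attempts: int = 3
) -> tuple[str, list[str]]:
    """Regenerate code, feeding each test failure back, until the tests pass."""
    error: Optional[str] = None
    errors_seen: list[str] = []
    for _ in range(max_attempts):
        source = model(task, error)
        error = run_tests(source, tests)
        if error is None:
            return source, errors_seen
        errors_seen.append(error)  # this feedback drives the next attempt
    raise RuntimeError(f"tests still failing after {max_attempts} attempts: {error}")


def fake_code_model(task: str, error: Optional[str]) -> str:
    # First attempt has an off-by-one bug; with error feedback it is fixed.
    if error is None:
        return "def add(a, b):\n    return a + b + 1\n"
    return "def add(a, b):\n    return a + b\n"
```

Using real test results as the feedback signal is what makes this loop reliable: the critique is grounded in execution rather than in the model's own opinion of its code.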

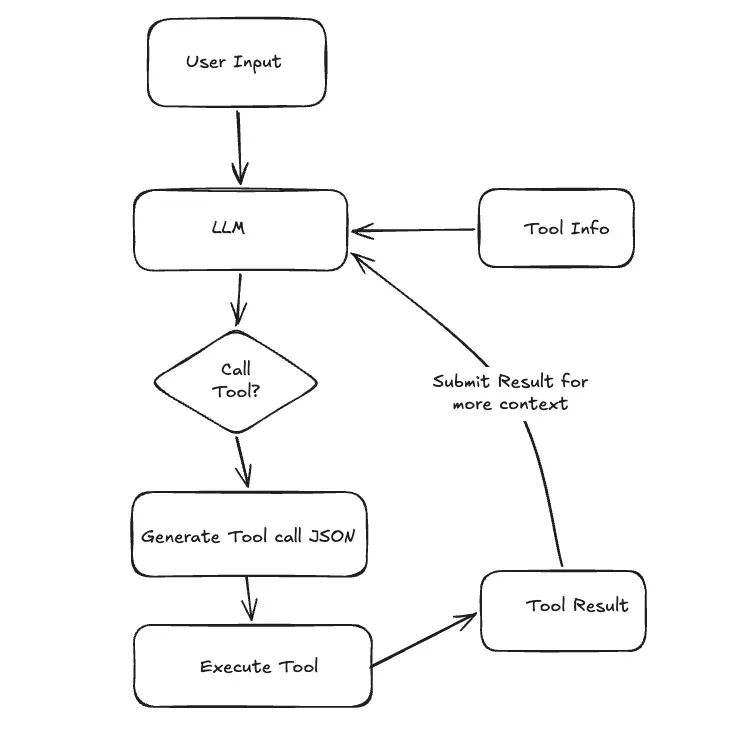

Tool Pattern

The tool pattern is also known as function call execution (or function calling). It may be the most widely used pattern in agentic systems. Tools let an agent reach context beyond its training data and act on the outside world, which helps it handle the user's request better.

The user submits a query. The agent has been set up with descriptions of all the available tools (external functions or API calls to the outside world). Based on the query, the agent decides whether to call a tool; if it does, it emits the tool name and arguments in a structured format (JSON). The application uses this output to execute the external tool or API. The result of the tool call is then fed back to the LLM as additional context, and the LLM uses that result together with the rest of the context to produce the final response.
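The application side of this flow can be sketched as a tool registry plus a dispatcher. The JSON shape `{"name": ..., "arguments": {...}}` is an assumption for illustration, not any specific provider's format, and `get_temperature` is a hypothetical tool returning made-up data in place of a real weather API.

```python
import json
from typing import Any, Callable

# Tool registry: name -> (function, description shown to the model).
TOOLS: dict[str, tuple[Callable[..., Any], str]] = {}


def tool(description: str):
    """Decorator that registers a function as a tool the agent may call."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[fn.__name__] = (fn, description)
        return fn
    return register


@tool("Return the current temperature in Celsius for a city.")
def get_temperature(city: str) -> float:
    # Hypothetical stand-in for an external weather API (made-up values).
    return {"Paris": 18.0, "Oslo": 4.5}.get(city, 20.0)


def tool_descriptions() -> list[dict[str, str]]:
    """What the agent is 'set up with': the name and description of every tool."""
    return [{"name": n, "description": d} for n, (_, d) in TOOLS.items()]


def execute_tool_call(raw: str) -> str:
    """Parse the model's JSON tool call, run the tool, and return a JSON result.

    Errors are returned as JSON too, so the model can see them and recover.
    """
    call = json.loads(raw)
    name, args = call["name"], call.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    fn, _ = TOOLS[name]
    try:
        result = fn(**args)
    except TypeError as exc:  # wrong or missing arguments
        return json.dumps({"error": str(exc)})
    return json.dumps({"name": name, "result": result})


if __name__ == "__main__":
    print(execute_tool_call('{"name": "get_temperature", "arguments": {"city": "Oslo"}}'))
```

The JSON string returned by `execute_tool_call` is what gets appended to the conversation so the LLM can use it when writing the final response.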

There are many use cases for this pattern:

- A personal agent sending an appointment reminder email

- Searching a vector database for relevant documents

- Executing shell commands to look up files and data

Conclusion

In this post, we looked at some popular agentic patterns that are currently used in various tools, and that you can also use when building agentic products. There are more advanced patterns, such as orchestration, multi-agent systems, and memory management. I will cover them in subsequent posts.
